BIRCH Hierarchical Clustering

Parameters:

threshold : float, default 0.5

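- The radius of the subcluster obtained by merging a new sample with the closest subcluster should be smaller than the threshold. Otherwise, a new subcluster is started. Setting this value very low promotes splitting, and vice versa.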

branching_factor : int, default 50

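- Maximum number of CF subclusters in each node. If a new sample arrives such that the number of subclusters exceeds branching_factor, the node is split into two nodes and the subclusters are redistributed between them. The parent subcluster of that node is removed, and two new subclusters are added as parents of the two split nodes.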

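n_clusters : int, instance of sklearn.cluster model, default 3

- Number of clusters after the final clustering step, which treats the subclusters from the leaves as new samples.

- None : the final clustering step is not performed and the subclusters are returned as is.

- sklearn.cluster Estimator : if a model is provided, it is fit treating the subclusters as new samples, and the initial data is mapped to the label of the closest subcluster.

- int : the model fit is AgglomerativeClustering with n_clusters set equal to the int.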

compute_labels : bool, default True

- Whether to compute labels for each fit.

copy : bool, default True

- Whether to make a copy of the given data. If set to False, the initial data will be overwritten.
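To make the effect of n_clusters concrete, here is a minimal sketch on synthetic data. The make_blobs data and the names X_demo, raw and merged are assumptions for illustration only; they are not part of the dataset used below.

```python
import numpy as np
from sklearn.cluster import Birch
from sklearn.datasets import make_blobs

# Synthetic stand-in data (an assumption; not the protein dataset used below).
X_demo, _ = make_blobs(n_samples=300, centers=3, cluster_std=0.5, random_state=0)

# n_clusters=None: the final clustering step is skipped,
# so the labels index the leaf subclusters directly.
raw = Birch(threshold=0.5, branching_factor=50, n_clusters=None).fit(X_demo)

# n_clusters=3: the leaf subclusters are merged by AgglomerativeClustering.
merged = Birch(threshold=0.5, branching_factor=50, n_clusters=3).fit(X_demo)

n_subclusters = raw.subcluster_centers_.shape[0]
n_raw_labels = np.unique(raw.labels_).size
n_merged_labels = np.unique(merged.labels_).size

print("leaf subclusters        :", n_subclusters)
print("labels, n_clusters=None :", n_raw_labels)
print("labels, n_clusters=3    :", n_merged_labels)
```

Without the final step the number of labels follows the number of leaf subclusters, which is governed by threshold; with an int the labels are capped at that many clusters.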

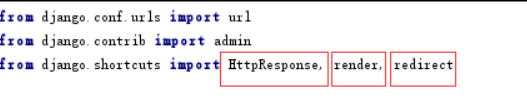

from itertools import cycle

from time import time

import numpy as np

import matplotlib as mpl

import matplotlib.colors as colors

from sklearn.preprocessing import StandardScaler

from sklearn.cluster import Birch

## Set font properties so that Chinese characters render correctly

mpl.rcParams['font.sans-serif'] = [u'SimHei']

mpl.rcParams['axes.unicode_minus'] = False

import pandas as pd

%matplotlib inline

import matplotlib.pyplot as plt

data = pd.read_csv('./protein/float_int_data.csv')

data.head()

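from sklearn.decomposition import PCA # Principal component analysis

pca = PCA(n_components=2, svd_solver='randomized') # Keep the first two principal components

data = pca.fit_transform(data) # Fit on the N-dimensional data and project it to 2 dimensions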

data[:4]

dt = pd.DataFrame(data)

X = dt

X.head()

X = X.values
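Since everything that follows runs on a 2-D projection, it can help to check how much variance the two components keep. The sketch below uses a hypothetical random feature table (raw_features) in place of the CSV file. It also applies StandardScaler, which the notebook imports but does not use; whether scaling suits this dataset is an assumption.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.RandomState(0)

# Hypothetical stand-in for the feature table: 200 samples,
# 6 features on very different scales.
raw_features = rng.normal(size=(200, 6)) * np.array([1, 5, 10, 50, 100, 500])

# Standardize first so that no single large-scale feature dominates the components.
scaled = StandardScaler().fit_transform(raw_features)

pca_demo = PCA(n_components=2, svd_solver='randomized', random_state=0)
projected = pca_demo.fit_transform(scaled)

# Fraction of the total variance retained by the two components.
kept = pca_demo.explained_variance_ratio_.sum()

print("projected shape:", projected.shape)
print("variance kept by 2 components: %.2f" % kept)
```

If the retained fraction is low, clusters found in the 2-D projection may not reflect structure in the original feature space.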

1. Comparison with only the threshold and n_clusters parameters of Birch() set

print(__doc__)

from sklearn.cluster import Birch, MiniBatchKMeans

# Use all colors that matplotlib provides by default.

colors_ = cycle(colors.cnames.keys())

fig = plt.figure(figsize=(12, 6))

fig.subplots_adjust(left=0.04, right=0.98, bottom=0.1, top=0.9)

# Compute clustering with Birch with and without the final clustering step

# and plot.

birch_models = [Birch(threshold=1.7, n_clusters=None),

                Birch(threshold=1.7, n_clusters=100)]

final_step = ['without global clustering', 'with global clustering']

for ind, (birch_model, info) in enumerate(zip(birch_models, final_step)):

    t = time()

    birch_model.fit(X)

    time_ = time() - t

    print("Birch %s as the final step took %0.2f seconds" % (info, time_))

    # Plot result

    labels = birch_model.labels_

    centroids = birch_model.subcluster_centers_

    n_clusters = np.unique(labels).size

    print("n_clusters : %d" % n_clusters)

    ax = fig.add_subplot(1, 3, ind + 1)

    for this_centroid, k, col in zip(centroids, range(n_clusters), colors_):

        mask = labels == k

        ax.scatter(X[mask, 0], X[mask, 1],

                   c='w', edgecolor=col, marker='.', alpha=0.5)

        # Centroids are only drawn when the labels index the subclusters directly

        if birch_model.n_clusters is None:

            ax.scatter(this_centroid[0], this_centroid[1], marker='+',

                       c='k', s=25)

    ax.set_ylim([-25, 25])

    ax.set_xlim([-25, 25])

    ax.set_autoscaley_on(False)

    ax.set_title('Birch %s' % info)

# Compute clustering with MiniBatchKMeans.

mbk = MiniBatchKMeans(init='k-means++', n_clusters=100, batch_size=100,

                      n_init=10, max_no_improvement=10, verbose=0,

                      random_state=0)

t0 = time()

mbk.fit(X)

t_mini_batch = time() - t0

print("Time taken to run MiniBatchKMeans %0.2f seconds" % t_mini_batch)

mbk_means_labels_unique = np.unique(mbk.labels_)

# Plot result

n_clusters = mbk_means_labels_unique.size

print("MiniBatchKMeans n_clusters : %d" % n_clusters)

ax = fig.add_subplot(1, 3, 3)

for this_centroid, k, col in zip(mbk.cluster_centers_,

                                 range(n_clusters), colors_):

    mask = mbk.labels_ == k

    ax.scatter(X[mask, 0], X[mask, 1], marker='.',

               c='w', edgecolor=col, alpha=0.5)

    ax.scatter(this_centroid[0], this_centroid[1], marker='+',

               c='k', s=25)

ax.set_xlim([-25, 25])

ax.set_ylim([-25, 25])

ax.set_title("MiniBatchKMeans")

ax.set_autoscaley_on(False)

plt.show()

The result is shown in the figure below:

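2. Tuning: with the threshold (radius) unchanged, compare the clustering obtained with branching_factor set explicitly against the clustering obtained with its default value

branching_factor caps the number of CF subclusters held in each node of the CF tree (Birch() defaults to 50); it is not a fixed number of samples per cluster. The subclusters should form from the data rather than be shaped by this cap, so branching_factor is set to 20000. The dataset has 19655 samples in total, so the cap can never be reached and no node is ever split.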
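As a rough illustration of what branching_factor changes, the sketch below fits Birch on synthetic data with the default cap and with a cap larger than the sample count, then reports the number of leaf subclusters. The data and the names X_demo and counts are assumptions; the counts on the real dataset will differ.

```python
from sklearn.cluster import Birch
from sklearn.datasets import make_blobs

# Synthetic stand-in data (an assumption; not the protein dataset).
X_demo, _ = make_blobs(n_samples=2000, centers=20, cluster_std=1.0, random_state=0)

counts = {}
# 50 is the default cap; len(X_demo) + 1 can never be reached,
# so the root node is never split in the second run.
for bf in (50, len(X_demo) + 1):
    model = Birch(threshold=0.5, branching_factor=bf, n_clusters=None).fit(X_demo)
    counts[bf] = model.subcluster_centers_.shape[0]
    print("branching_factor=%d -> %d leaf subclusters" % (bf, counts[bf]))
```

The two counts need not match: node splits change which subcluster a new sample is routed to, even though threshold is the same in both runs.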

colors_ = cycle(colors.cnames.keys())

fig = plt.figure(figsize=(12, 6))

fig.subplots_adjust(left=0.04, right=0.98, bottom=0.1, top=0.9)

# Compute clustering with Birch with a raised and with the default

# branching_factor, both without the final clustering step, and plot.

birch_models = [Birch(threshold=1.7, branching_factor=20000, n_clusters=None),

                Birch(threshold=1.7, n_clusters=None)]

final_step = ['branching_factor=20000', 'branching_factor=50 (default)']

for ind, (birch_model, info) in enumerate(zip(birch_models, final_step)):

    t = time()

    birch_model.fit(X)

    time_ = time() - t

    print("Birch with %s took %0.2f seconds" % (info, time_))

    # Plot result

    labels = birch_model.labels_

    centroids = birch_model.subcluster_centers_

    n_clusters = np.unique(labels).size

    print("n_clusters : %d" % n_clusters)

    ax = fig.add_subplot(1, 2, ind + 1)

    for this_centroid, k, col in zip(centroids, range(n_clusters), colors_):

        mask = labels == k

        ax.scatter(X[mask, 0], X[mask, 1],

                   c='w', edgecolor=col, marker='.', alpha=0.5)

        if birch_model.n_clusters is None:

            ax.scatter(this_centroid[0], this_centroid[1], marker='+',

                       c='k', s=25)

    ax.set_ylim([-25, 25])

    ax.set_xlim([-25, 25])

    ax.set_autoscaley_on(False)

    ax.set_title('Birch %s' % info)

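The result is shown in the figure below:

3. Further tuning: the output shows that the two settings differ by nearly 100 clusters. Keeping branching_factor=20000, reduce the threshold (radius) and observe the clustering when splitting is encouraged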
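The relationship between threshold and the number of subclusters can be checked in isolation before plotting. The sketch below is a minimal example on synthetic data; the helper count_subclusters and the two threshold values are hypothetical and chosen only to make the contrast visible.

```python
from sklearn.cluster import Birch
from sklearn.datasets import make_blobs

# Synthetic stand-in data (an assumption; not the protein dataset).
X_demo, _ = make_blobs(n_samples=1000, centers=5, cluster_std=1.0, random_state=0)


def count_subclusters(X, threshold):
    """Fit Birch without the final clustering step and count leaf subclusters."""
    model = Birch(threshold=threshold, n_clusters=None).fit(X)
    return model.subcluster_centers_.shape[0]


n_small = count_subclusters(X_demo, threshold=0.3)  # small radius: more splitting
n_large = count_subclusters(X_demo, threshold=3.0)  # large radius: more merging

print("threshold=0.3 -> %d subclusters" % n_small)
print("threshold=3.0 -> %d subclusters" % n_large)
```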

colors_ = cycle(colors.cnames.keys())

fig = plt.figure(figsize=(12, 6))

fig.subplots_adjust(left=0.04, right=0.98, bottom=0.1, top=0.9)

# Compute clustering with Birch for two smaller thresholds, both without

# the final clustering step, and plot.

birch_models = [

    Birch(threshold=1.3, branching_factor=20000, n_clusters=None),

    Birch(threshold=0.8, branching_factor=20000, n_clusters=None)]

final_step = ['threshold=1.3', 'threshold=0.8']

for ind, (birch_model, info) in enumerate(zip(birch_models, final_step)):

    t = time()

    birch_model.fit(X)

    time_ = time() - t

    print("Birch with %s took %0.2f seconds" % (info, time_))

    # Plot result

    labels = birch_model.labels_

    centroids = birch_model.subcluster_centers_

    n_clusters = np.unique(labels).size

    print("n_clusters : %d" % n_clusters)

    ax = fig.add_subplot(1, 2, ind + 1)

    for this_centroid, k, col in zip(centroids, range(n_clusters), colors_):

        mask = labels == k

        ax.scatter(X[mask, 0], X[mask, 1],

                   c='w', edgecolor=col, marker='.', alpha=0.5)

        # Both models skip the final clustering step, so always mark the centroid

        ax.scatter(this_centroid[0], this_centroid[1], marker='+',

                   c='k', s=25)

    ax.set_ylim([-25, 25])

    ax.set_xlim([-25, 25])

    ax.set_autoscaley_on(False)

    ax.set_title('Birch %s' % info)

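The result is shown in the figure below:

4. The output shows that lowering the threshold from 1.3 to 0.8 changes the result by nearly 500 clusters. Keeping branching_factor=20000, now increase the threshold and observe the clustering when splitting is reduced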

colors_ = cycle(colors.cnames.keys())

fig = plt.figure(figsize=(12, 6))

fig.subplots_adjust(left=0.04, right=0.98, bottom=0.1, top=0.9)

# Compute clustering with Birch for two larger thresholds, both without

# the final clustering step, and plot.

birch_models = [

    Birch(threshold=2.0, branching_factor=20000, n_clusters=None),

    Birch(threshold=2.5, branching_factor=20000, n_clusters=None)]

final_step = ['threshold=2.0', 'threshold=2.5']

for ind, (birch_model, info) in enumerate(zip(birch_models, final_step)):

    t = time()

    birch_model.fit(X)

    time_ = time() - t

    print("Birch with %s took %0.2f seconds" % (info, time_))

    # Plot result

    labels = birch_model.labels_

    centroids = birch_model.subcluster_centers_

    n_clusters = np.unique(labels).size

    print("n_clusters : %d" % n_clusters)

    ax = fig.add_subplot(1, 2, ind + 1)

    for this_centroid, k, col in zip(centroids, range(n_clusters), colors_):

        mask = labels == k

        ax.scatter(X[mask, 0], X[mask, 1],

                   c='w', edgecolor=col, marker='.', alpha=0.5)

        # Both models skip the final clustering step, so always mark the centroid

        ax.scatter(this_centroid[0], this_centroid[1], marker='+',

                   c='k', s=25)

    ax.set_ylim([-25, 25])

    ax.set_xlim([-25, 25])

    ax.set_autoscaley_on(False)

    ax.set_title('Birch %s' % info)

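The result is shown in the figure below:

5. To reduce the number of clusters further, the threshold has to be increased further. Next, set it to 3.0 and 3.5 and observe the number of clusters again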
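Raising the threshold step by step until the cluster count is acceptable can also be written as a small search. The sketch below is one possible way to do it on synthetic data; the helper smallest_threshold_for, the candidate grid and the target of 20 subclusters are all hypothetical choices, not values taken from this analysis.

```python
from sklearn.cluster import Birch
from sklearn.datasets import make_blobs


def smallest_threshold_for(X, max_subclusters, candidates, branching_factor=50):
    """Return (threshold, count) for the smallest candidate threshold whose
    leaf subcluster count is at most max_subclusters, or None if none qualifies."""
    for threshold in sorted(candidates):
        model = Birch(threshold=threshold, branching_factor=branching_factor,
                      n_clusters=None).fit(X)
        count = model.subcluster_centers_.shape[0]
        if count <= max_subclusters:
            return threshold, count
    return None


# Synthetic stand-in data (an assumption; not the protein dataset).
X_demo, _ = make_blobs(n_samples=1000, centers=5, cluster_std=1.0, random_state=0)

grid = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5]
result = smallest_threshold_for(X_demo, max_subclusters=20, candidates=grid)
print(result)
```

The search refits the model once per candidate, so on a dataset of about 20000 samples a coarse grid is the cheaper starting point.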

colors_ = cycle(colors.cnames.keys())

fig = plt.figure(figsize=(12, 6))

fig.subplots_adjust(left=0.04, right=0.98, bottom=0.1, top=0.9)

# Compute clustering with Birch for thresholds 3.0 and 3.5, both without

# the final clustering step, and plot.

birch_models = [

    Birch(threshold=3.0, branching_factor=20000, n_clusters=None),

    Birch(threshold=3.5, branching_factor=20000, n_clusters=None)]

final_step = ['threshold=3.0', 'threshold=3.5']

for ind, (birch_model, info) in enumerate(zip(birch_models, final_step)):

    t = time()

    birch_model.fit(X)

    time_ = time() - t

    print("Birch with %s took %0.2f seconds" % (info, time_))

    # Plot result

    labels = birch_model.labels_

    centroids = birch_model.subcluster_centers_

    n_clusters = np.unique(labels).size

    print("n_clusters : %d" % n_clusters)

    ax = fig.add_subplot(1, 2, ind + 1)

    for this_centroid, k, col in zip(centroids, range(n_clusters), colors_):

        mask = labels == k

        ax.scatter(X[mask, 0], X[mask, 1],

                   c='w', edgecolor=col, marker='.', alpha=0.5)

        # Both models skip the final clustering step, so always mark the centroid

        ax.scatter(this_centroid[0], this_centroid[1], marker='+',

                   c='k', s=25)

    ax.set_ylim([-25, 25])

    ax.set_xlim([-25, 25])

    ax.set_autoscaley_on(False)

    ax.set_title('Birch %s' % info)

The result is shown in the figure below: